This startup wants to put its brain-computer interface in the Apple Vision Pro

Now, Cognixion is bringing its AI communication app to the Vision Pro, which Forsland says offers more functionality than the purpose-built Axon-R. “The Vision Pro gives you all of your apps, the App Store, everything you want to do,” he says.

Apple opened the door to BCI integration in May, when it announced a new protocol that allows users with severe mobility disabilities to control the iPhone, iPad, and Vision Pro without physical movement. Another BCI company, Synchron, whose implant is inserted into a blood vessel next to the brain, has also integrated its system with the Vision Pro. (Apple is not known to be developing its own BCI.)

In Cognixion's trial, the company has replaced Apple's headband with its own, which is embedded with six electroencephalographic, or EEG, sensors. These collect information from the brain's visual and parietal cortex, located at the back of the head. Specifically, Cognixion's system identifies visual fixation signals, which occur when a person holds their gaze on an object. This allows users to select from a menu of options in the interface using mental attention alone. A neural computing pack worn at the side processes the brain data outside the Vision Pro.

“The philosophy of our approach is about reducing the amount of burden generated by the person's communication needs,” says Chris Ullrich, chief technology officer at Cognixion.

Current communication tools can help but are not ideal. For example, low-tech handheld letterboards let patients look at certain letters, words, or pictures so that a caregiver can guess their meaning, but they are time-consuming to use. And eye-tracking technology is still expensive and not always reliable.

“We are actually building an AI for each individual participant that is adapted to their history, their style of humor, whatever we can gather, into something that is essentially a user proxy,” he says.

A startup used AI to make a psychedelic without the trip

While there is growing evidence that psychedelic drugs can effectively treat mental health conditions, especially in cases where traditional treatments have failed, they continue to come with downsides.

Their hallucinogenic effects can be intimidating and overwhelming, and dosing sessions last several hours. A good treatment outcome relies heavily on an individual's mindset going into a session and the environment in which they receive it. And although it is rare, psychedelics can sometimes worsen existing mental illness.

Mindstate Design Labs is one of a slate of new companies aiming to make safer psychedelics by removing the classic “trip” associated with them. The company uses AI to help design psychedelic drugs that produce specific mental states without hallucinations, and its first attempt looks promising.

“We have created the least psychedelic psychedelic that is still psychoactive,” says CEO Dillan DiNardo. “It's quite psychoactive, but there are no hallucinations.”

Founded in 2021 and backed by Y Combinator and the founders of OpenAI, Neuralink, Instacart, and Coinbase, the company built a set of models from data on various psychoactive drugs and more than 70,000 “reports” gathered from official sources, social media, Reddit, and even the dark web.

The company used its platform's analysis of how psychedelics produce different effects to design its first drug candidate, MSD-001, a proprietary oral formulation of 5-MeO-MiPT, also known by the street name moxy. In trial results shared with WIRED, the drug was safe and well tolerated at five different dose levels in 47 healthy participants. It also produced psychoactive effects without mind-bending hallucinations, which the company says validates its AI platform.

While participants reported heightened emotions, associative thinking, enhanced imagination, and perceptual changes such as colors appearing brighter, they did not experience hallucinations, ego dissolution, oceanic boundlessness, or other typical characteristics of a psychedelic trip.

The company measured the drug's effects with validated scales used in psychedelic research and asked participants subjective questions such as “Are you happy?” and “Are you sad?” The researchers also observed the stability of volunteers' eyes and performed brain imaging before, during, and after the psychoactive effects. Using this brain imaging data, the company was able to determine that the drug produced many of the same brain activity patterns as other first-generation psychedelics. “The drug is getting into the brain and doing what we intended it to do,” DiNardo says.

Psychoactive effects began about 30 minutes after participants took the drug, with peak intensity occurring at about an hour and a half to two hours. The company reported no serious adverse events.

The trial, which took place at the Centre for Human Drug Research in the Netherlands, included a mix of individuals who had tried psychedelics in the past and others who had not.

Mindstate's approach is based on the idea that the psychedelic “trip” may not be needed for therapeutic benefit. Psychedelics act on the brain's serotonin system, promoting neuroplasticity, which involves the growth of neurons and the formation of new connections. Some researchers believe that this ability to promote neuroplasticity, not the hallucinogenic effects, is the key to treating mental illness.

AI psychosis is rarely psychosis at all

A new trend is emerging in psychiatric hospitals. People in crisis are arriving with false, sometimes dangerous beliefs, grandiose delusions, and paranoid thoughts. A common thread connects them: marathon conversations with AI chatbots.

WIRED spoke with more than a dozen psychiatrists and researchers, who are increasingly concerned. In San Francisco, psychiatrist Keith Sakata says he has counted a dozen cases severe enough to warrant hospitalization this year, cases in which artificial intelligence “played a significant role in their psychotic episodes.” As this situation unfolds, a catchier label has taken hold in the headlines: “AI psychosis.”

Some patients insist that the bots are sentient, or they spin grand new theories of physics. Other doctors describe patients locked in days of back-and-forth with the tools, arriving at the hospital with thousands of pages of transcripts detailing how the bots supported or reinforced obviously problematic thoughts.

Reports like these are piling up, and the consequences are brutal. Distressed users and their family and friends have described spirals that led to lost jobs, ruptured relationships, involuntary hospital admissions, jail time, and even death. Yet clinicians tell WIRED that the medical community is divided. Is this a distinct phenomenon that deserves its own label, or a familiar problem with a modern trigger?

AI psychosis is not a recognized clinical label. Still, the phrase has spread in news reports and on social media as a catchall descriptor for some kind of mental health crisis following prolonged chatbot conversations. Even industry leaders invoke it to discuss the many mental health problems linked to AI. At Microsoft, Mustafa Suleyman, CEO of the tech giant's AI division, warned of the “psychosis risk” in a post last month. Sakata says he is pragmatic and uses the phrase with people who already do. “It is useful as shorthand for discussing a real phenomenon,” says the psychiatrist. However, he is quick to add that the term “may be misleading” and “risks oversimplifying complex psychiatric symptoms.”

That oversimplification is exactly what concerns many of the psychiatrists beginning to grapple with the problem.

Psychosis is characterized as a departure from reality. In clinical practice, it is not an illness but a complex “constellation of symptoms including hallucinations, thought disorder, and cognitive difficulties,” says James MacCabe, a professor in the Department of Psychosis Studies at King's College London. It is often associated with health conditions such as schizophrenia and bipolar disorder, although episodes can be triggered by a wide range of factors, including extreme stress, substance use, and sleep deprivation.

But according to MacCabe, case reports of AI psychosis focus almost exclusively on delusions: strongly held but false beliefs that cannot be shaken by contradictory evidence. While acknowledging that some cases may meet the criteria for a psychotic episode, MacCabe says “there is no evidence” that AI has any influence on the other features of psychosis. “It is only the delusions that are affected by their interaction with AI.” Other patients who report mental health problems after engaging with chatbots, MacCabe notes, exhibit delusions without any other features of psychosis, a condition called delusional disorder.

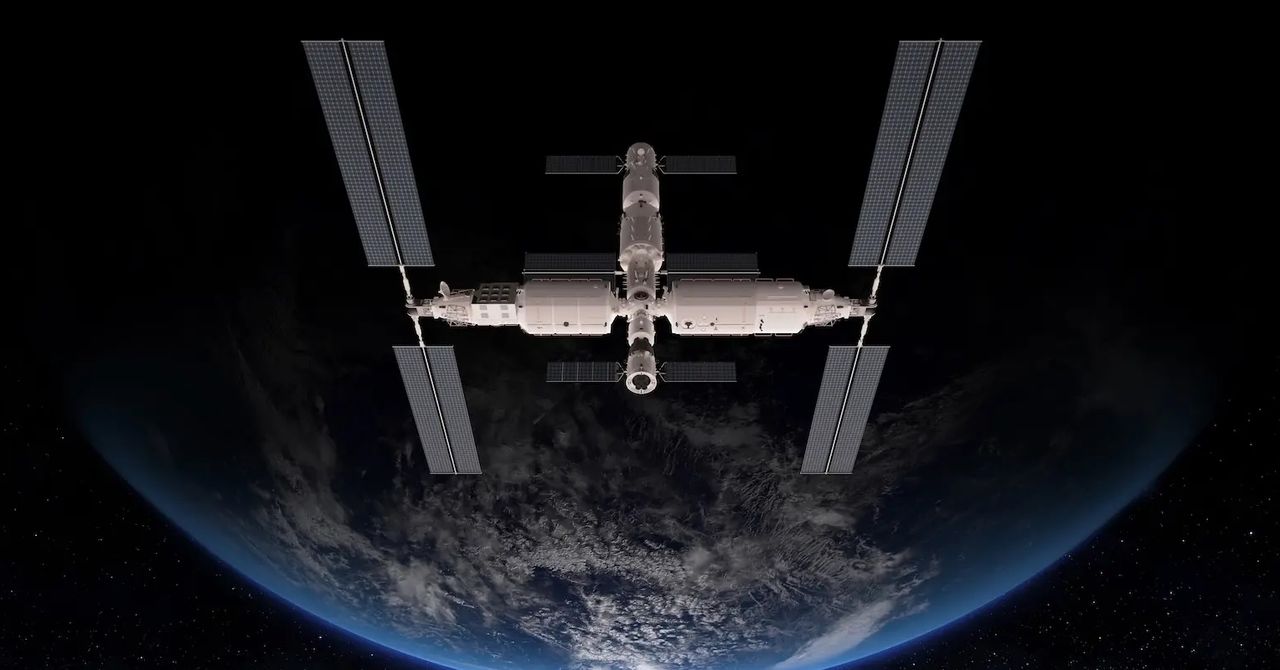

Big Tech dreams of putting data centers in space

For one thing, the systems he envisions would process data relatively slowly compared with those on the ground. They would be constantly bombarded with radiation, and “obsolescence would be a problem” because repairs or upgrades would be confoundingly hard. Hajimiri believes that data centers in space could, one day, be a viable solution, but he hesitates to say when that day might come. “It would definitely be feasible in a few years,” he said. “The question is how effective they would be and how cost-effective they would become.”

The idea of simply putting data centers in orbit is not limited to the offhand musings of techies or the deeper thinking of academics. Even some elected officials in cities where companies like Amazon hope to build data centers are raising the point. Tucson, Arizona, council member Nikki Lee waxed poetic about their potential during an August hearing, in which the council unanimously voted down a proposed data center in the city.

“Many people say data centers don't belong in the desert,” Lee said. But “if it is really a national priority,” then the focus must be on “putting research and development dollars into data centers that will exist in space. And that may sound wild and a little bit science fiction.”

That is true, but it is happening on an experimental scale, not an industrial one. A startup called Starcloud hoped to launch a fridge-size satellite carrying several Nvidia chips in August, but the launch date was pushed back. Lonestar Data Systems landed a miniature data center, carrying precious information such as an Imagine Dragons song, on the moon a few months ago, although the lander tipped over and died in the attempt. More such launches are planned for the coming months. But “it is very difficult to predict how fast this idea will become economically feasible,” said Matthew Weinzierl, an economist at Harvard who studies market forces in space. “Space-based data centers may well have some niche uses, such as processing space-based data and providing national security capabilities,” he said. “To be a meaningful rival to terrestrial centers, however, they will need to compete on cost and service quality like anything else.”

For now, it is much more expensive to put a data center in space than to put one in, say, Virginia's Data Center Alley, where power demand could double in the next decade if left unregulated. And as long as staying on Earth remains cheaper, profit-motivated companies will favor terrestrial data center expansion.

However, there is one factor that could encourage OpenAI and others to look to the sky: there is not much regulation up there. Building data centers on Earth requires municipal permits, and companies can be stymied by local governments whose residents worry that data center development might drain their water, raise their electricity bills, or overheat their planet. In space, there are no neighbors to complain, said Michelle Hanlon, a political scientist and lawyer who leads the Center for Air and Space Law at the University of Mississippi. “If you are an American company looking to put data centers in space, then the sooner the better, before Congress is like, ‘Oh, we have to regulate it.’”

Distillation can make AI models smaller and cheaper

The original version of this story appeared in Quanta Magazine.

The Chinese AI company DeepSeek released a chatbot earlier this year called R1, which drew a huge amount of attention. Most of it focused on the fact that a relatively small and unknown company said it had built a chatbot that rivaled the performance of those from the world's most famous AI companies, while using a fraction of the computing power and cost. As a result, the stocks of many Western tech companies plummeted; Nvidia, which sells the chips that run leading AI models, lost more stock value in a single day than any company in history.

Some of that attention involved an element of accusation. Sources alleged that DeepSeek had obtained, without permission, knowledge from OpenAI's proprietary o1 model by using a technique known as distillation. Much of the news coverage framed this possibility as a shock to the AI industry, implying that DeepSeek had discovered a new, more efficient way to build AI.

But distillation, also called knowledge distillation, is a widely used tool in AI, a subject of computer science research going back a decade and a tool that big tech companies use on their own models. “Distillation is one of the most important tools that companies have today to make models more efficient,” said Enric Boix-Adsera, a researcher who studies distillation at the University of Pennsylvania's Wharton School.

Dark knowledge

The idea for distillation began with a 2015 paper by three researchers at Google, including Geoffrey Hinton, the so-called godfather of AI and a 2024 Nobel laureate. At the time, researchers often ran ensembles of models (“many models glued together,” said Oriol Vinyals, a principal scientist at Google DeepMind and one of the paper's authors) to improve their performance. “But it was incredibly cumbersome and expensive to run all the models in parallel,” Vinyals said. “We were intrigued with the idea of distilling that onto a single model.”

The researchers thought they might make progress by addressing a notable weak point in machine learning algorithms: wrong answers were all considered equally bad, no matter how wrong they might be. In an image-classification model, for example, “confusing a dog with a fox was penalized the same way as confusing a dog with a pizza,” Vinyals said. The researchers suspected that the ensemble models did contain information about which wrong answers were less bad than others. Perhaps a smaller “student” model could use the information from the large “teacher” model to more quickly grasp the categories it was supposed to sort images into. Hinton called this “dark knowledge,” invoking an analogy with cosmological dark matter.

After discussing this possibility with Hinton, Vinyals developed a way to get the large teacher model to pass more information about the image categories to a smaller student model. The key was homing in on “soft targets” in the teacher model, where it assigns probabilities to each possibility rather than giving firm answers. One model, for example, calculated that there was a 30 percent chance that an image showed a dog, 20 percent that it showed a cat, 5 percent that it showed a cow, and 0.5 percent that it showed a car. By using these probabilities, the teacher model effectively revealed to the student that dogs are quite similar to cats, not so different from cows, and quite distinct from cars. The researchers found that this information would help the student learn how to identify images of dogs, cats, cows, and cars more efficiently. A big, complicated model could be reduced to a leaner one with barely any loss of accuracy.
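The difference between hard answers and soft targets can be made concrete with a small sketch. Everything below is illustrative rather than taken from the paper's experiments: the teacher scores, the two student predictions, and the `temperature` value (a softening knob commonly used in distillation) are assumptions chosen for the example. It shows that a hard label penalizes a dog-as-cat mistake and a dog-as-car mistake identically, while the teacher's soft targets penalize the second more.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a higher temperature softens them."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(target_probs, predicted_probs):
    """Loss of a prediction measured against a target distribution."""
    return -sum(t * math.log(p)
                for t, p in zip(target_probs, predicted_probs) if t > 0)

CLASSES = ["dog", "cat", "cow", "car"]

# Hypothetical teacher scores for one dog photo (assumed values).
teacher_logits = [4.0, 3.0, 1.5, -2.0]
soft_targets = softmax(teacher_logits, temperature=2.0)

# The hard label only says "dog" and nothing about the other classes.
hard_target = [1.0, 0.0, 0.0, 0.0]

# Two hypothetical students, equally confident in "dog" but wrong in different ways.
student_a = [0.60, 0.30, 0.08, 0.02]  # leftover belief mostly on "cat"
student_b = [0.60, 0.02, 0.08, 0.30]  # leftover belief mostly on "car"

hard_loss_a = cross_entropy(hard_target, student_a)
hard_loss_b = cross_entropy(hard_target, student_b)
soft_loss_a = cross_entropy(soft_targets, student_a)
soft_loss_b = cross_entropy(soft_targets, student_b)

print("hard-label loss:", hard_loss_a, hard_loss_b)   # identical for both students
print("soft-target loss:", soft_loss_a, soft_loss_b)  # the "car" mistake costs more
```

Training a student against `soft_targets` instead of `hard_target` is what passes along the teacher's sense of which wrong answers are nearly right.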

Explosive growth

The idea was not an immediate hit. The paper was rejected from a conference, and Vinyals, discouraged, turned to other topics. But distillation arrived at an important moment. Around this time, engineers were discovering that the more training data they fed into neural networks, the more effective those networks became. The size of models soon exploded, as did their capabilities, but the costs of running them climbed in step with their size.

Many researchers turned to distillation as a way to make smaller models. In 2018, Google researchers unveiled a powerful language model called BERT, which the company soon began using to help process web searches. But BERT was big and costly to run, so the next year, other developers distilled a smaller version sensibly named DistilBERT, which became widely used in business and research. Distillation gradually became ubiquitous, and it is now offered as a service by companies such as Google, OpenAI, and Amazon. The original distillation paper, which is still published only on the arxiv.org preprint server, has now been cited more than 25,000 times.

Given that distillation requires access to the innards of the teacher model, it is not possible for a third party to sneakily distill data from a closed-source model such as OpenAI's o1, as DeepSeek was thought to have done. That said, a student model could still learn quite a bit from a teacher model just by prompting the teacher with certain questions and using the answers to train its own models, an almost Socratic approach to distillation.
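The question-and-answer approach needs nothing from the teacher except its text output, which a short sketch makes plain. The names here (`build_distillation_dataset`, `toy_teacher`, and the canned answers) are hypothetical stand-ins invented for illustration; a real setup would call an actual model and then fine-tune a student on the collected pairs, a step this sketch leaves out.

```python
from typing import Callable, Dict, List

def build_distillation_dataset(
    teacher: Callable[[str], str], prompts: List[str]
) -> List[Dict[str, str]]:
    """Ask the teacher each prompt and keep its answer as a training example."""
    return [{"prompt": p, "completion": teacher(p)} for p in prompts]

def toy_teacher(prompt: str) -> str:
    """Hypothetical stand-in for a closed model reachable only through text."""
    canned = {
        "Is a fox more like a dog or a pizza?": "A dog: both are canids.",
        "Is a cow more like a cat or a car?": "A cat: both are mammals.",
    }
    return canned.get(prompt, "I am not sure.")

prompts = [
    "Is a fox more like a dog or a pizza?",
    "Is a cow more like a cat or a car?",
    "Is a truck more like a car or a cow?",
]

# Only prompts go in and only text comes out: no probabilities, no internals.
dataset = build_distillation_dataset(toy_teacher, prompts)
for example in dataset:
    print(example)
```

Because the student sees only finished answers, it gets none of the per-category probabilities that make up the teacher's soft targets.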

Meanwhile, other researchers continue to find new applications. In January, the NovaSky lab at UC Berkeley showed that distillation works well for training chain-of-thought reasoning models, which use multistep “thinking” to better answer complicated questions. The lab says its fully open source Sky-T1 model cost less than $450 to train, and it achieved similar results to a much larger open source model. “We were genuinely surprised by how well distillation worked in this setting,” said Dacheng Li, a Berkeley doctoral student and co-student lead of the NovaSky team. “Distillation is a fundamental technique in AI.”

Original story reprinted with permission from Quanta Magazine, an editorially independent publication of the Simons Foundation whose mission is to enhance public understanding of science by covering research developments and trends in mathematics and the physical and life sciences.