The new algorithm makes it faster to find the shortest paths

Original version from This story appeared in Quanta magazine.

If you want to solve a trimmable problem, it often helps organize. You might, for example, broke the problem on pieces and get rid of the easiest pieces first. But this type of sort has costs. You can finish too much time you put pieces in order.

This dilemma is especially relevant to one of the most informatics in Informatics: Finding the shortest path from a certain starting point in the network on any other point. It is like a Suped-Up version of the problem you need to solve every time you move: learning the best path from your new home for work, gym and supermarket.

“The shortest trails are a wonderful problem with which anyone in the world can connect with,” said Mikkel Thorup, a computer scientist at the University of Copenhagen.

Intuitively, it should be easiest to find the shortest path to nearby destinations. So, if you want to design the fastest possible algorithm for the shortest problems, it seems to be reasonable to start finding the closest point, and then next closest and so on. But to do this, you have to understand multiple times which point closest. You will arrange points per distance as you go. There are basic speed limitations for any algorithm that follows this approach: you can’t go faster than the time to be sorted.

Fourty years ago, researchers who design the shortest algorithms of the trails have launched this “sorting barrier”. Now the researcher team designed a new algorithm that breaks him. It does not sort, and works faster than any algorithm that makes it.

“The authors were funny in thoughts that they could break this barrier,” Robert Tarjan said, a computer scientist at the University University. “It’s an amazing score.”

Knowledge limit

To analyze the shortest paths problematic, researchers use language charts-networks or nodes, connected lines. Each connection between nodes is marked with a number called its weight, which can represent the length of that segment or time required to switch. There is usually a lot of routes between any two nodes, and the shortest one is whose weight is added to the smallest numbers. Given the chart and specific “source” node, the goal of the algorithm is to find the shortest path to each other node.

The most famous shortest algorithm, was designed by the Pionic Computer Scientist Edsger Dijkstra in 1956. year, the source begins and works outside step by step. It is an effective approach, because knowledge of the shortest road to the nearby nodes can help you find the shortest paths to distant. But because the end result is the sorted list of the shortest trails, the sort barrier sets the fundamental limit on how fast the algorithm can run.

Mystery about how to form quasi-cardistal

Original version from This story appeared in How many magazines.

Of their discovery in 1982. year, exotic materials known as quasistans are worried physicists and chemists. Their are atominated in the chains of pentagon, decoctions and other forms to form patterns that never repeat. These forms seem to defeat with physical laws and intuition. How can atoms may “know” how to form elaborate arrangements that do not have non-advanced understanding of mathematics?

“Quasikristists are one of those things that as a scientist, when you first teach about them,” It’s crazy, “Wenhao Sun, a scientist at Michigan University.

Recently, however, Poph’s results peeled out some of their secrets. In one study, the sun and collaborators adjusted the method for the study of crystal to determine that at least some quasi-unskistal stable – their atoms will not be placed in lower energy. This finding helps explain how and why qualistic forms. The second study gave a new way of engineering quater and observe them in the process of formation. And the third research group reported the previously unknown properties of these unusual materials.

Historically, quasi-cards are causing creation and characterization.

“There is no doubt that they have interesting real estate,” Sharon Glotzer said, a calculus physicist who deals at the University of Michigan, but was not involved in this paper. “But we can make them largely, to save them, at the industrial level-[that] He didn’t feel, but I think this will start to show us how to do it reproducibly. “

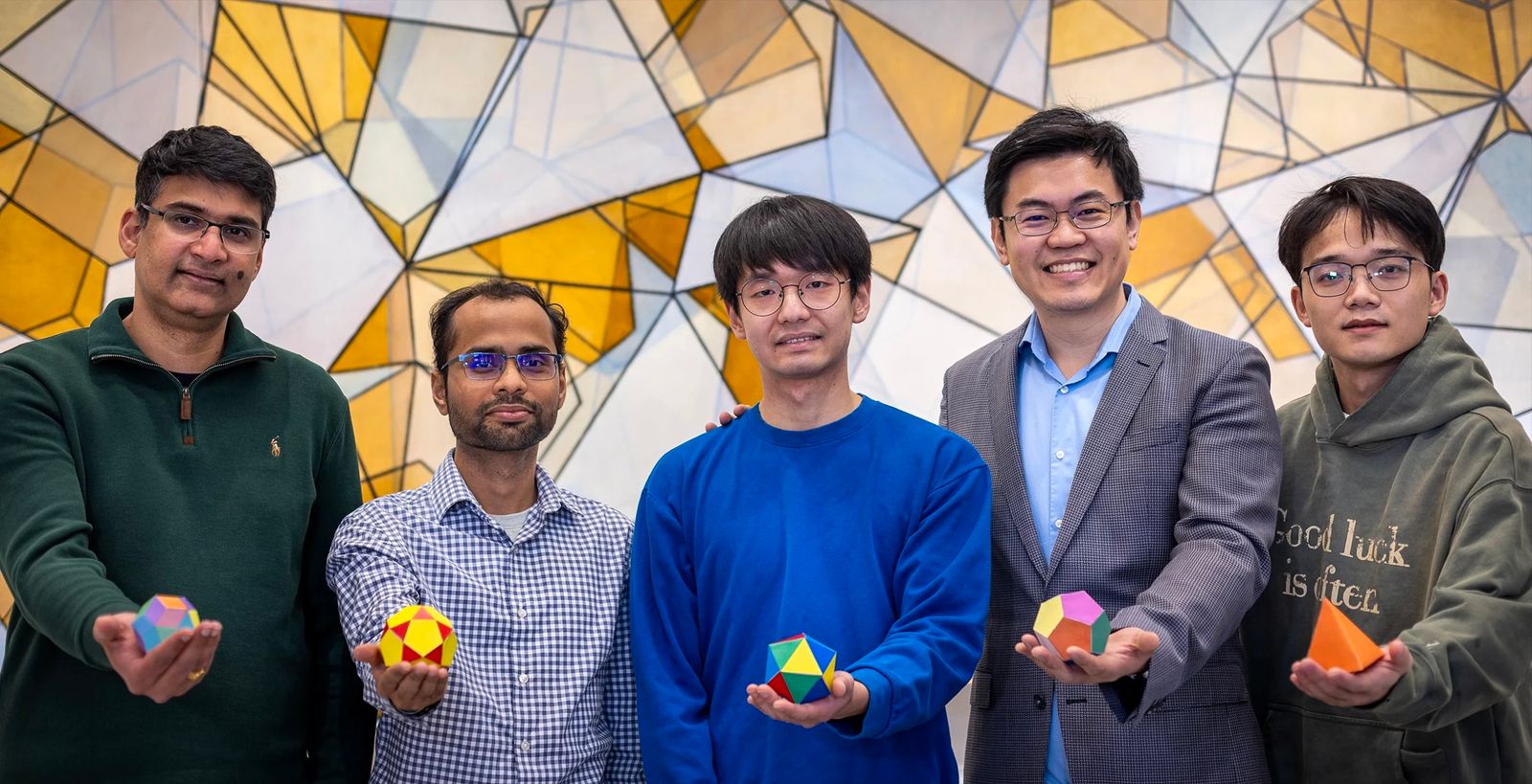

Vikram Gavini, Sambit Das, Wooohyeon Baek, Wenhao Sun and Shibo Tan Hold examples of geometric shapes that appear in quasi-cards. The researchers of the University of Michigan showed that at least some qualities are thermodynamically stable.

Photo: Marcin Szczepanski Michigan Engineering‘Forbidden’ symmetry

Almost a decade before the Israeli physicist Dan Shechtman revealed the first examples of quasi-card in the laboratory, the British Mathematical Physist Roger Penrose thought of “quasiperiodic” – but not completely repeating the samples that would manifest in those materials.

Penrose developed tile sets that could cover an infinite plane without gap or overlap, in the forms that do not, and cannot, repeat. Unlike testers made from triangles, rectangles and hexagonal shapes that are symmetrical over two, three, four or six-axis and which space in periodic samples – a penetrating sheath have a “prohibited” peripruk symmetry. Tiles make pentagon arrangements, and pentagonies cannot be firmly fit next to each other to sail the aircraft. Thus, while tiles align along with five axes and tests infinitely, different parts of the sample look similar; Exactly repeat is impossible. Quasiperiodic penrose bodies made title Scientific american 1977, five years before they jumped out of pure mathematics in the real world.

What is thirst? | Wired

“There are only a few things that are so important for your body that there is a completely innate drive to get it if you fall into a shortage,” Knight said. “Oxygen, food, water and sodium.”

However, animals like us do not perceive salt desire as powerful, controlling driving as well as oxygen, food and water. Sensors signal the levels of salt in the brain; In addition to OVLT and SFO, sensors in the heart reveal stretching atric and ventricular. But there is no analog salt pango when we need, the way the stomach for food or scratches is cramped for water. Instead, the need to consume salt is mediated by tastes and brains of awards. “The taste of salt is bimodan,” Knight said. “It’s good taste in low doses; at high doses tastes disgusting, like drinking seawater.”

Imagine the urge to eat a large bag of potatoes chips. If the body needs salt, these chips will cause a pleasant dopamine to flood the brain. If the body doesn’t need salt, that dopamine drop disappears. “It is quite learning strengthening,” Yuki of the eye, neurobiologist at the California Institute of Technology that studies how the body maintains homeostasis. “More dopamine means repeated behavior.”

Everybody’s thirst is different

Scientists who follow the river collect data, and then have a choice whether to act on their findings. Similarly, only because the brain measures the blood of sodium does not mean that it has to act on that information.

Take thirteen trone squirrels Elena Grachev. Gracheva, neurophysiologist at Chinese school school, studies these rodents, originally with North American lawns, to understand how concrete regions of the brain control thirst. Thirteen lined ground squirrels is an ideal model for this, she said, because he hibernates for more than half a year, without eating or drinking. “They’re like a monk,” Grachev said. “They don’t go outside for eight months. They don’t have water in their underground burr.” How would they not thirst?

Elena Gracheva (left) followed that the brain of thirteen-coated groundwide squirrels (right) suppress their thirst during hibernation.

The courtesy of Graheva Laboratory

CC-by 2.0 via Wikimedia Commons

It’s not that squirrels don’t need water. They do it. Their bodies cry for that. But according to Grac’s survey, their brain ignores the body signals during hibernation.

In mammals, a drop in blood water levels (which means the simultaneous increase in salt concentration, all things that are equal) run two related processes. The hypothalamus pump hormone vasopressin, which sends kidneys to keep water, not release it as urine, and SFO begins with a drinking thirst. However, while the ground squirrels are hized, their vasopresinal levels are jumping, but the animal still does not drink. “The vasopressine circuit was normal, but the thirst of neurons were regulated,” Grachev said. “These two tracks are unlit.” The body tries to keep the water it has, but it does not work would not be consumed more.

The logic of the disturbed circle is extremely powerful. “Even if you wake them up in the middle of hibernation, they won’t drink,” Grachev said.

In the basic network that Graheva studies in squirrels is universal in mammals, to and including people. But that same neurological logic does not lead to the same behaviors. People drink a glass of water when they are thirsty. Cats and rabbits generally lead water from the food they eat. Camels can burn their fatty water stores (which produce carbon dioxide and water), but they also consume gallons and keep it in the stomach when they need it later. Marine Vidri can drink ocean water and excrete urine that is solution from water they swim; They are the only sea mammals who are actively working.

As each animal manages water and salt specializes in its ecosystem, lifestyle and selective pressures. Question “What does it mean to be thirsty?” There is no one answer. Every thirst in your own way.

The original story for a re-written permission from Quanto Magazine, editorial independence of the Simons Foundation whose mission is to improve public understanding of science by attending the development of research and physical and life sciences.

Distillation can make and models smaller and cheaper

Original version from This story appeared in How many magazines.

Chinese and the Deepseek company announced Chatbot earlier this year called R1, which pulled huge attention. Most focused on the fact that the relatively small and unknown company said that it was built by Chatbot who has monitored the effects of those from the world’s most famous companies and the use of partnerships and expenses. As a result, the stocks of many Western technological companies fell; Nvidia, which sells chips that run the leading AI models, lost more stock values in one day than any company in history.

Some of the attention involved the accusation element. Sources are alleged that Deepseek received, without permission, knowledge from own models O1 O1 using the technique known as distillation. Much of the news frame this opportunity as a shock industry and, which implies that Deepseek revealed a new, more efficient way to build ai.

But the distillation, called knowledge, is a widely used tool in AI, the topic of computer science research that returns to a decade and a tool that is used by large technical companies on their own models. “Distillation is one of the most important tools that companies today have models to make more efficiently,” said Enric Boix-Adsera, researcher who studying distillation at the School of Wharton University in Pennsylvania.

Dark knowledge

The idea of distillation began with paper for 2015. year by three researchers on Google, including Geoffrey Hinton, the so-called Kum AI and 2024 Nobel Laureata. Then the researchers often run the Ensemble models – “Many models are glued together,” said Oriol Vinyals, the main scientist on Google Deepmind and one of the authors of paper – to improve their effect. “But it was incredibly awkward and expensive to start all models in parallel,” Vinyals said. “We intrigued with the idea of distilling to give it to one model.”

Researchers thought I could make progress solving a significant weak point in machine learning algorithms: wrong answers were considered as bad, no matter how wrong it might be. As part of the image classification, for example, “confusing dog with a fox penalized in the same way as a confusing dog with pizza,” Vinyals said. The researchers suspected that the Ensemble Models contain information that the wrong answers were less bad than others. Perhaps a smaller model “Student” could use data from the Great “Model” to understand the categories faster, which should have sorted images in. Hinton called this “dark knowledge”, referring to the analogy of cosmological dark matter.

After discussing this possibility with Hinton, Vinyals has developed a way to get a large teacher model to transfer more information on the image categories to a smaller student model. The key is in the household in “soft goals” in the teacher model – where the probabilities are assigned for each possibility, not solid answers. One model, for example, calculated that there is 30 percent of the chance that the picture showed a dog, 20 percent to showed the cat, 5 percent to showed a cow and 0.5 percent to show the car. By using these probabilities, the teacher model effectively discovered the student that dogs are quite similar to cats, not so different from cows and quite different from cars. The researchers found that this information would help the student learn how to recognize images of dogs, cats, cows and cars more efficiently. A large, complicated model could reduce on a skinny one with barely any loss of accuracy.

Explosive growth

The idea was not the current hit. The work was rejected from the conference, and Vinyals, discouraged, turn to other topics. But Distillation arrived in an important moment. Arms, engineers have discovered that larger data on training were fed into neural networks, the more efficiently became these networks. The size of the model soon exploded, as well as their possibilities, but the costs of leaders who climbed into step with their size.

Many researchers turned into distillation as a way to make smaller models. Google Researchers in 2018. years presented a powerful language model called Bert, which company soon began to use to help search web search. But Bert was great and expensive to run, so the next year, other developers distilled a minor version reasonably named Distilbert, who became a job and research. Distillation gradually became ubiquitous, and is now being offered as a company service such as Google, Openai and Amazon. Original distillation paper, which is still published only on the Arxiv.org Pretprint server, is now listed more than 25,000 times.

Given that distillation requires access to the interior of the teaching model, it is not possible for the third party to be annoyed in order to begin data from the closed code model such as O1 O1, because they were considered O1, because O1 O1 was considered. This was said, the student model could continue to learn much from the teacher model only through the encouragement of teachers with certain issues and the use of responses for trained models – almost Socratic access to distillation.

Meanwhile, other researchers continue to find new applications. In January, the Novasni Labelatory showed that distillation work well for the training models of formal reasons, which use multistage “thinking” to better respond to complex questions. The laboratory says that his fully open source Sky-T1 model costs less than $ 450 for training, and achieved similar results in a much larger model of open source. “We were truly surprised so good distillments in this environment,” Dacheng Lee said, Berkeley doctoral student and a judge in the Novaskajski team. “Distillation is a fundamental technique in AI.”

Original story Reprinted with permission from How many magazines, Editorial independence Simons Foundation Whose mission is to improve public understanding of science covering research development and trends in mathematics and physical and life sciences.