Anti-Trump Protesters Take Aim at ‘Naive’ US-UK AI Deal

“It was so important to come today, because there is such a broad coalition of groups. [Trump] is wrong on every level. He should not be in power, he should not be allowed in front of any TV camera, let alone as president of the United States, and to have him visit here, which I can hardly believe, is simply wrong. It’s really not on.”

Like other protesters, Sarah was quick to cite the far-right rally in London last Saturday, which attracted over 100,000 people and exposed deepening divisions within the United Kingdom, as a reason for protesting.

“To have this guy hosted here by our government and our royal family is such a fucking serious mistake,” she says, describing Trump as an “epitome of hatred and violence.” “We’re angry. We’re all really angry.”

Photo: Natasha Bernal

Clive Teague, who was at the protest representing Extinction Rebellion Waverley and Borders in Surrey, says the AI deal is one of many things the government is getting badly wrong. Trump “is there [in Windsor] because we’re here. If we weren’t here, he would be coming down the Mall. We’re here to stop him.”

Teague says he is not against the use of AI, as long as it runs on new, clean energy sources rather than existing power. “We cannot continue to burn fossil fuels to keep powering these data centers, as they will be serving requests from the rest of the world.” That view was echoed by other environmental groups, such as Greenpeace, which object to huge data centers being approved without what they consider a proper assessment of the impact on local water systems and the electricity grid.

“Greenpeace does not oppose AI,” said Greenpeace UK chief scientist Doug Parr in a written statement to WIRED. “Multibillion-pound companies should be forced to take on responsibility for the solution, whether that is cooling with far less water or running on new, clean renewable power, instead of just cheering on the AI sector.”

RFK Jr.’s Vaccine Panel Votes Down Its Own Proposal to Require Prescriptions for Covid-19 Shots

In a second vote, the advisers recommended adding language about the risks of vaccination to the vaccine information sheet that is already required by law.

The committee’s focus on Covid-19 vaccines reflects Kennedy’s long-standing suspicion of them. Since taking office in February, Kennedy has canceled half a billion dollars in mRNA vaccine research and has terminated a large contract with Moderna, one of the vaccine’s manufacturers, for work on a pandemic bird flu vaccine.

During Friday’s meeting, CDC scientists presented extensive data on the safety and effectiveness of the Covid vaccines. They also explained in detail how the agency tracks Covid hospitalizations and said that the agency has a “rigorous and standardized process” for determining whether hospitalizations are classified as Covid-19 cases.

During the discussion portion of the meeting, members of the committee made several unfounded claims. Robert Malone, a former mRNA researcher who has spread vaccine misinformation, questioned whether there is actually any evidence that the shots protect against the disease. “Are there well-defined, characterized correlates of protection for Covid, yes or no?” he asked.

Cody Meissner, a pediatrician at Dartmouth College, replied that there is a “reasonable measurement of neutralizing or binding antibodies that are correlated with protection against symptomatic infection in the first few months” after vaccination.

At one point, Hillary Blackburn, a pharmacist on the committee, questioned whether a Covid vaccine could be linked to her mother’s lung cancer diagnosis, which came two years after she received a Covid vaccine. She said she was aware of four other people in her small hometown who had been diagnosed with the same type of cancer. “Is it related to the vaccine?” she asked.

In a tense exchange about potential birth defects, some ACIP members pressed Pfizer about eight birth defects that occurred in the vaccinated group of pregnant women and two that occurred in the unvaccinated group. Alejandra Gurtman, a clinician in clinical research and development at Pfizer, answered that these rates are comparable to the rates of congenital anomalies seen in the general population.

Carol Hayes, a liaison from the American College of Nurse-Midwives who was present at the meeting, explained that most birth defects arise during the first trimester of pregnancy, and the women in the cited study were vaccinated at 12 to 24 weeks of pregnancy.

At Friday’s meeting, the committee also reversed a decision it had made only the day before. On Thursday, the advisers voted to no longer recommend the combined measles, mumps, rubella, and varicella (MMRV) vaccine for children under 4 years old. However, they voted to maintain coverage of that vaccine through the Vaccines for Children program, which serves low-income children and those without insurance. On Friday, they voted that the program should not, in fact, cover it.

On Friday, the advisers also voted 11 to 1 in favor of postponing a decision on whether to delay the birth dose of the hepatitis B vaccine by a month. The committee discussed the issue at length on Thursday, although it was unclear why the committee had been asked to consider potential changes, because the hepatitis B vaccine has been given to newborns in the US since 1991.

Infants get the vaccine before they leave the hospital because the virus can be passed from an infected mother to her baby during birth. Hepatitis B is a serious liver infection that can lead to cirrhosis and cancer. The vaccine is highly effective at preventing infection in newborns.

Chari Cohen, president of the Hepatitis B Foundation, says there is no scientific rationale for delaying the hepatitis B vaccine until a month after birth, and she worries that hepatitis B infections will rise if the panel eventually recommends delaying immunization.

“We’ll probably see more babies and young children getting infected,” Cohen says. “From a public health infrastructure perspective, we are worried that this risk-based approach will fail to prevent infections in babies born to infected moms.”

Up to 16 percent of HBV-positive pregnant women are not tested for hepatitis B, so screening does not catch all infected mothers.

“We don’t understand the motivation or explanation of this discussion,” says Cohen.

Meet the Winners of the 2025 Ig Nobel Prizes

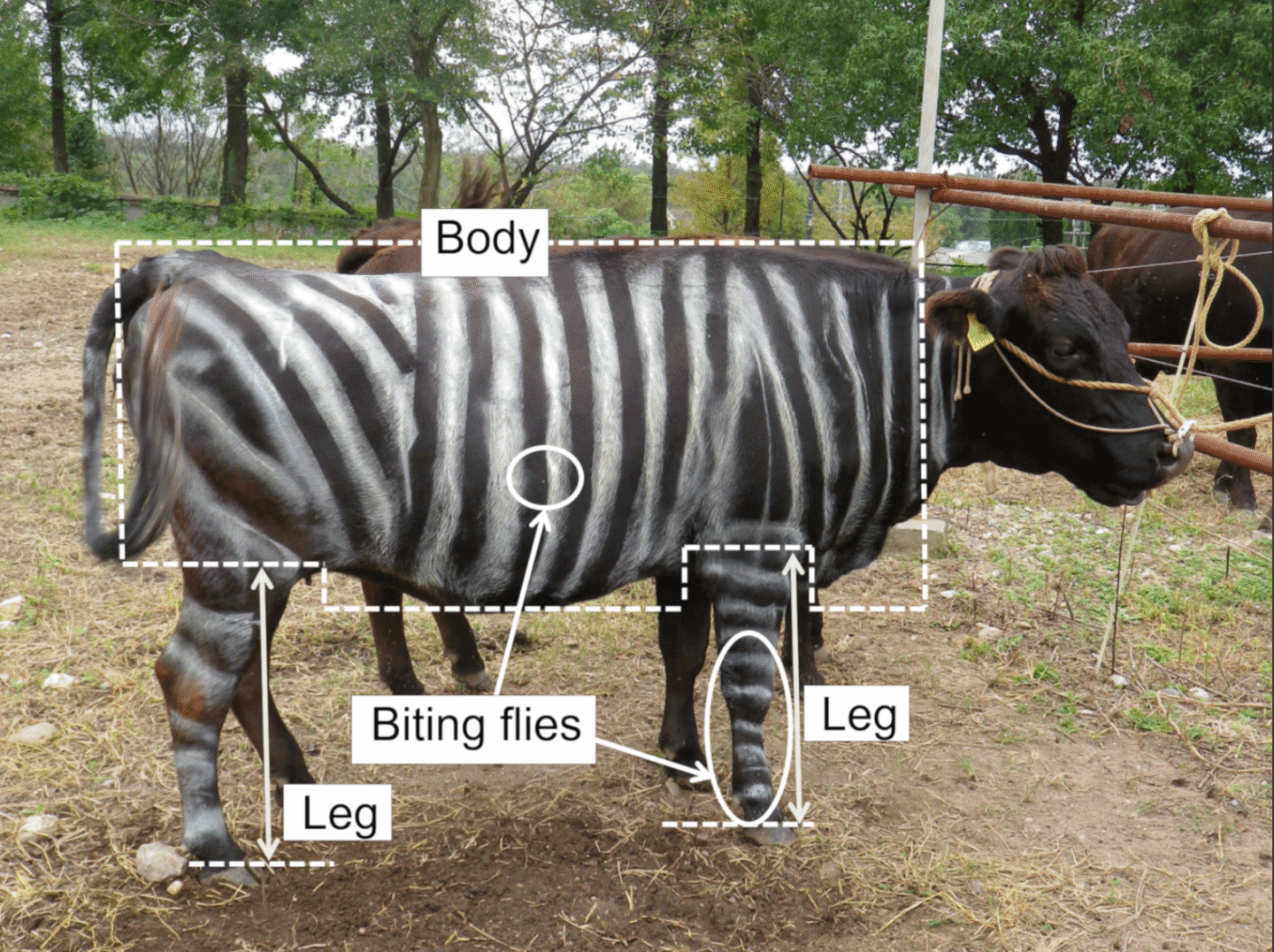

Does alcohol boost foreign language fluency? Do West African lizards have a preferred pizza topping? And can painting cows with zebra stripes help repel biting flies? These and other unusual research questions were honored tonight in a virtual ceremony announcing the 2025 recipients of the annual Ig Nobel Prizes. Yes, it is that time of year again, when the serious and the silly converge, for science.

Founded in 1991, the Ig Nobels are a good-natured parody of the Nobel Prizes; they honor “achievements that first make people laugh and then make them think.” The unabashedly campy awards ceremony features miniature operas, scientific demonstrations, and the 24/7 lectures, in which experts must explain their work twice: once in 24 seconds, and again in just seven words.

Acceptance speeches are limited to 60 seconds. And as the motto implies, the research being honored may look ridiculous at first glance, but that doesn’t mean it is devoid of scientific merit. In the weeks following the ceremony, the winners will also give free public talks, which will be posted on the Improbable Research website.

Without further ado, here are the winners of the 2025 Ig Nobel Prizes.

Biology

Photo: Tomoki Kojima et al., 2019

Citation: Tomoki Kojima, Kazato Oishi, Yasushi Matsubara, Yoshihiko Fukushima, Say Sato, Junichi Ueda, and Katsutoshi Kino, for their experiments to learn whether cows painted with zebra-like stripes can avoid being bitten by flies.

Any dairy farmer can tell you that biting flies are a pestilent scourge for cattle herds, which is why one so often sees cows jerk and twitch their skin, desperately trying to rid themselves of the pests. There is an economic cost as well, because the flies cause cattle to graze and feed less, bed down for shorter periods, and start bunching together, which increases heat stress and the risk of injury to the animals. The result is lower milk yields from dairy cows and lower beef yields from feedlot cattle.

You know who isn’t much bothered by biting flies? The zebra. Scientists have long debated the function of the zebra’s distinctive black-and-white striped pattern. Is it for camouflage? Confusing potential predators? Or is it to repel those pesky flies? Tomoki Kojima et al. decided to put the last hypothesis to the test, painting zebra stripes on six pregnant Japanese Black cows at the Aichi Agricultural Research Center in Japan. They used water-based lacquers that washed off after a few days, so the cows could take turns in three different groups: zebra stripes, black stripes only, or no stripes (as a control).

Distillation Can Make AI Models Smaller and Cheaper

The original version of this story appeared in Quanta Magazine.

The Chinese AI company DeepSeek released a chatbot earlier this year called R1, which drew a huge amount of attention. Most of it focused on the fact that a relatively small and unknown company said it had built a chatbot that rivaled the performance of those from the world’s most famous AI companies while using a fraction of the computing power and cost. As a result, the stocks of many Western tech companies fell; Nvidia, which sells the chips that run leading AI models, lost more stock value in a single day than any company in history.

Some of that attention involved an element of accusation. Sources alleged that DeepSeek had obtained, without permission, knowledge from OpenAI’s proprietary o1 model by using a technique known as distillation. Much of the news coverage framed this possibility as a shock to the AI industry, implying that DeepSeek had discovered a new, more efficient way to build AI.

But distillation, also called knowledge distillation, is a widely used tool in AI, a subject of computer science research going back a decade and a tool that big tech companies use on their own models. “Distillation is one of the most important tools that companies have today to make models more efficient,” said Enric Boix-Adsera, a researcher who studies distillation at the University of Pennsylvania’s Wharton School.

Dark knowledge

The idea for distillation began with a 2015 paper by three researchers at Google, including Geoffrey Hinton, the so-called godfather of AI and a 2024 Nobel laureate. At the time, researchers often ran ensembles of models (“many models glued together,” said Oriol Vinyals, a principal scientist at Google DeepMind and one of the paper’s authors) to improve their performance. “But it was incredibly cumbersome and expensive to run all the models in parallel,” Vinyals said. “We were intrigued with the idea of distilling that onto a single model.”

The researchers thought they might make progress by addressing a notable weak point in machine-learning algorithms: wrong answers were all considered equally bad, no matter how wrong they might be. In an image-classification model, for example, “confusing a dog with a fox was penalized the same way as confusing a dog with a pizza,” Vinyals said. The researchers suspected that the ensemble models did contain information about which wrong answers were less bad than others. Perhaps a smaller “student” model could use the information from the large “teacher” model to more quickly grasp the categories it was supposed to sort images into. Hinton called this “dark knowledge,” invoking an analogy with cosmological dark matter.
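The weak point described above can be seen in a minimal sketch, assuming the standard cross-entropy loss against a single correct label (the article does not name the loss the researchers had in mind, and the probabilities below are invented for illustration). Because only the probability given to the correct class enters the loss, mistaking the dog for a fox costs exactly as much as mistaking it for a pizza.

```python
import math

def cross_entropy_hard(probs, true_label):
    """Cross-entropy against a one-hot label: only the true class's probability matters."""
    return -math.log(probs[true_label])

# Hypothetical model outputs for a photo of a dog (made-up numbers).
near_miss = {"dog": 0.3, "fox": 0.6, "pizza": 0.1}  # leans toward "fox"
wild_miss = {"dog": 0.3, "fox": 0.1, "pizza": 0.6}  # leans toward "pizza"

loss_near = cross_entropy_hard(near_miss, "dog")
loss_wild = cross_entropy_hard(wild_miss, "dog")

# The two losses are identical, even though one mistake is far more reasonable.
print(loss_near, loss_wild)
```

How the remaining probability is spread across the wrong classes never reaches the training signal, which is the information the researchers hoped a teacher model could supply.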

After discussing this possibility with Hinton, Vinyals developed a way to get the large teacher model to pass more information about the image categories to a smaller student model. The key was homing in on “soft targets” in the teacher model, where it assigns probabilities to each possibility rather than firm this-or-that answers. One model, for example, calculated that there was a 30 percent chance that an image showed a dog, 20 percent that it showed a cat, 5 percent that it showed a cow, and 0.5 percent that it showed a car. By using these probabilities, the teacher model effectively revealed to the student that dogs are quite similar to cats, not so different from cows, and quite distinct from cars. The researchers found that this information would help the student learn how to identify images of dogs, cats, cows, and cars more efficiently. A big, complicated model could be reduced to a leaner one with barely any loss of accuracy.
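As a rough illustration of soft targets, the sketch below turns a teacher's raw scores into probabilities and measures how far a student's probabilities are from them. It assumes a temperature-scaled softmax and a Kullback-Leibler divergence as the matching loss, a common formulation of distillation; the logits, the temperature value, and the function names are all made up for this example and are not taken from the article.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; a higher temperature gives a softer distribution."""
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def kl_divergence(p, q):
    """KL(p || q): how poorly q matches the target distribution p."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

classes = ["dog", "cat", "cow", "car"]
teacher_logits = [4.0, 3.5, 2.0, -1.0]  # hypothetical teacher scores for a dog photo
student_logits = [2.0, 0.5, 0.5, 0.5]   # hypothetical untrained student scores
T = 2.0                                 # assumed temperature

soft_targets = softmax(teacher_logits, T)    # the teacher's soft targets
student_probs = softmax(student_logits, T)
distill_loss = kl_divergence(soft_targets, student_probs)

for name, p in zip(classes, soft_targets):
    print(f"{name}: {p:.3f}")
print("distillation loss:", distill_loss)
```

The soft targets rank cat above cow above car, so a student trained to reduce this loss is pushed to treat cats as the nearest alternative to dogs and cars as the most distant, rather than treating every wrong class alike.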

Explosive growth

The idea was not an immediate hit. The paper was rejected from a conference, and Vinyals, discouraged, turned to other topics. But distillation arrived at an important moment. Around this time, engineers were discovering that the more training data they fed into neural networks, the more effective those networks became. The size of models soon exploded, as did their capabilities, but the costs of running them climbed in step with their size.

Many researchers turned to distillation as a way to make smaller models. In 2018, Google researchers unveiled a powerful language model called BERT, which the company soon began using to help parse web searches. But BERT was big and costly to run, so the next year, other developers distilled a smaller version sensibly named DistilBERT, which became widely used in business and research. Distillation gradually became ubiquitous, and it is now offered as a service by companies such as Google, OpenAI, and Amazon. The original distillation paper, still published only on the arxiv.org preprint server, has now been cited more than 25,000 times.

Given that distillation requires access to the innards of the teacher model, it is not possible for a third party to sneakily distill data from a closed-source model like OpenAI’s o1, as DeepSeek was thought to have done. That said, a student model could still learn quite a bit from a teacher model just by prompting the teacher with certain questions and using the answers to train its own models, an almost Socratic approach to distillation.
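The question-and-answer approach can be sketched in a few lines, assuming the teacher is reachable only as a function from a prompt to a text reply. Everything here is hypothetical: `toy_teacher` is a stand-in with canned answers, not a real model or API, and the resulting pairs would still need to be fed to a separate training step that is not shown.

```python
def toy_teacher(prompt):
    """Stand-in for a closed model that exposes only its text answers (hypothetical)."""
    canned = {
        "What is 2 + 2?": "4",
        "What is the capital of France?": "Paris",
    }
    return canned.get(prompt, "I don't know")

def collect_training_pairs(teacher, prompts):
    """Query the teacher and keep each prompt with its answer as a training example."""
    return [{"prompt": p, "response": teacher(p)} for p in prompts]

prompts = ["What is 2 + 2?", "What is the capital of France?"]
pairs = collect_training_pairs(toy_teacher, prompts)

# Each pair would serve as one supervised example for the student model.
for pair in pairs:
    print(pair)
```

Unlike soft targets, this gives the student only the teacher's final answers, not the probabilities behind them, which is why it needs no access to the teacher's internals.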

Meanwhile, other researchers continue to find new applications. In January, the NovaSky lab showed that distillation works well for training chain-of-thought reasoning models, which use multistep “thinking” to better answer complicated questions. The lab says its fully open source Sky-T1 model cost less than $450 to train, and it achieved similar results to a much larger open source model. “We were genuinely surprised by how well distillation worked in this setting,” said Dacheng Li, a Berkeley doctoral student and co-student lead of the NovaSky team. “Distillation is a fundamental technique in AI.”

Original story reprinted with permission from Quanta Magazine, an editorially independent publication of the Simons Foundation whose mission is to enhance public understanding of science by covering research developments and trends in mathematics and the physical and life sciences.